Google math can be tricky. As part of a comprehensive review strategy for our firm, we’ve reached out to current clients and asked them to leave a Google review. We’re making this part of our post-launch checklist so that our clients can give prospects a candid account of their experience with us. We don’t write reviews for our clients; instead, we ask them to leave whatever review they feel best reflects our level of service.

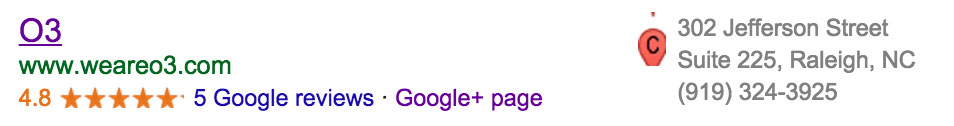

After a week of this strategy, we had accumulated five reviews. Before the fifth review arrived, Google noted that we had reviews but did not display a star rating. Our best guess is that this keeps a handful of outliers from skewing the score. When the fifth review came in, Google gave us an overall rating of 4.8.

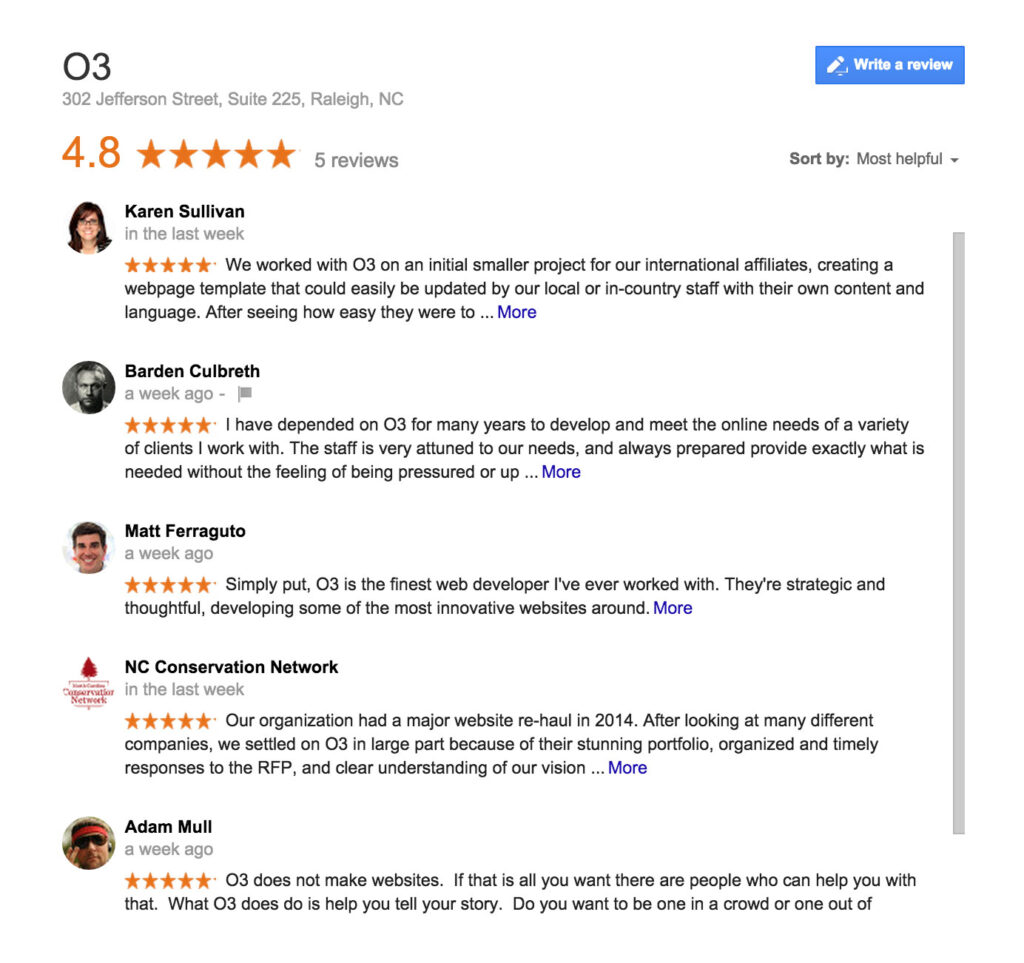

When we saw the 4.8, we assumed we had received four 5-star reviews and one 4-star review (which averages to 4.8). When we checked, however, all five were 5-star reviews. Bewildered, we started poking around.
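For reference, here is the arithmetic behind that assumption, next to the plain average of the five 5-star reviews we actually received:

$$
\frac{5 + 5 + 5 + 5 + 4}{5} = \frac{24}{5} = 4.8
\qquad\text{vs.}\qquad
\frac{5 + 5 + 5 + 5 + 5}{5} = \frac{25}{5} = 5.0
$$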

So how does Google get a 4.8 out of five 5-star reviews? Google reveals very little about how it concocts its secret sauce, but it appears to be using a Bayesian average to calculate ratings.

Bayesian Averages and Google Ratings

In a nutshell, a Bayesian average blends the observed ratings with prior information instead of relying on the plain arithmetic mean, which dampens the effect of outliers in relatively small data sets. This makes some sense, but Google has not published how its version works. We also wondered whether Google might be factoring in third-party reviews from services like Facebook or Yelp. That doesn’t appear to be the case: our ratings on those services are all 5-star as well, so they could not have pulled the average down.
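For readers who want the math, one common form of a Bayesian average is shown below. This is a general textbook form, not a formula Google has published: $n$ is the number of reviews, $\bar{x}$ is their plain mean, $m$ is a prior rating, and $C$ is how many "virtual" reviews the prior counts for.

$$
\text{rating} = \frac{C \cdot m + n \cdot \bar{x}}{C + n}
$$

With only a few reviews, the prior $m$ dominates; as $n$ grows, the result converges to the plain mean $\bar{x}$.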

Our best guess is that Google treats a sample of five reviews as too small to produce a reliable rating. Instead of using the plain average of the reviews on the table, it applies an adjusted calculation until the sample grows.

We’ve checked this against other businesses’ ratings across the web. It seems that five 5-star ratings earn a 4.8, the seventh 5-star rating brings the score to 4.9, and only with the tenth 5-star rating does Google switch to the plain average and display a 5.0.
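To see how a run of perfect reviews can display as less than 5.0, here is a minimal Python sketch of a common Bayesian average, (C × m + sum of ratings) / (C + n), applied to one through ten 5-star reviews. The prior rating m = 4.0 and prior weight C = 1 are hypothetical values chosen for illustration; Google has not disclosed whether it uses this form or what its parameters are, and the names `bayesian_average`, `PRIOR_MEAN`, and `PRIOR_WEIGHT` are our own.

```python
from typing import Sequence


def bayesian_average(ratings: Sequence[float], prior_mean: float, prior_weight: float) -> float:
    """Blend observed ratings with a prior rating.

    prior_weight behaves like a number of imaginary reviews that all equal
    prior_mean, so it pulls small samples toward the prior.
    """
    return (prior_weight * prior_mean + sum(ratings)) / (prior_weight + len(ratings))


# Hypothetical prior values for illustration only; Google's are undisclosed.
PRIOR_MEAN = 4.0
PRIOR_WEIGHT = 1.0

for n in range(1, 11):
    ratings = [5.0] * n  # n perfect 5-star reviews
    score = bayesian_average(ratings, PRIOR_MEAN, PRIOR_WEIGHT)
    # Star ratings are shown to one decimal place, so format to one decimal.
    print(f"{n:2d} five-star reviews: {score:.3f} (displayed as {score:.1f})")
```

With these made-up values, five perfect reviews come out to about 4.83, which displays as 4.8, and the score creeps toward 5.0 as reviews accumulate. It still displays 4.9 at ten reviews, so this simple form does not reproduce every threshold we observed; treat it as an illustration of the mechanism, not a model of Google’s exact calculation.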

Confusing and Conflicting Information

Our issue with using a Bayesian average this way is that it confuses end users. A person vetting a firm may see a 4.8 and consider it a perfectly suitable, attractive rating. They may then click through to read the reviews, only to find five 5-star reviews behind a 4.8-star rating. Because the math simply doesn’t add up, some may conclude that the firm is doing something nefarious to hide unflattering reviews.

We wouldn’t mind as much if Google told users about the Bayesian average and explained why five 5-star reviews yield a 4.8. No such explanation exists, so users are left to speculate about how those reviews could produce a 4.8, and they may come to the wrong conclusions.

Risks to Review Campaigns

Knowing that Google appears to use an undisclosed Bayesian average to determine star ratings has implications for review campaigns. Early in a campaign, aim to line up 10 reviews within the first few weeks. Space them out, and expect even perfect ratings to produce an imperfect score for the first nine. Once you’re past that point, your rating appears to be calculated from the plain average.

Remember that the purpose of reviews is for customers to give a candid account of their experience doing business with your company. It’s always best to approach these campaigns with that in mind. You want your reviews to look and feel organic, and to actually be organic. A large batch of reviews arriving all at once will look inorganic, not only to readers but also to the review platforms themselves.

Take a level-headed approach: expect this iffy math in the short term, and keep your long-term goal in view, which is a third-party-vetted body of reviews that builds confidence in your business.